This opinion post explores the idea and possible benefits of using AI tools upstream in the software development process to challenge weak business cases and point out unfounded assumptions.

A Note on Using AI Tools Responsibly

This paper discusses methods of using AI tools in a business context. The prompts and examples are designed to be practical and immediately usable. Before trying them, a few considerations are worth keeping in mind.

Use approved tools. Many organizations have policies governing which AI tools may be used for business purposes. Use only tools your organization has sanctioned and be sure to follow any applicable data handling guidelines.

Protect sensitive information. Project proposals often contain confidential financial, strategic, or personnel information. Do not paste sensitive content into consumer AI tools or any platform not approved for confidential business data.

Validate the output. AI analysis is a starting point, not a verdict. The value of the AI lens is in the questions it surfaces and the assumptions it exposes — not in treating its conclusions as definitive. Human judgment remains essential, particularly where the AI flags something that requires organizational context to interpret correctly.

The goal is better questions, not automated decisions. AI does not approve or reject projects; it interrogates reasoning. The decision remains where it belongs: with the people who understand the business.

The Streetlight Effect

There is an old joke that goes something like this. A police officer finds a drunk man crawling on his hands and knees under a streetlight late at night. The drunk says he’s looking for his keys. The officer helps search, and after finding nothing, asks: “Are you sure you dropped them here?” The drunk replies: “No, I dropped them in the park — but the light is better here.”

The joke has become a kind of cultural meme for something known as “the streetlight effect”: a bias in decision making to study what is measurable or accessible rather than what is most relevant.

This paper asks the question “Is it possible our efforts at using AI productively in Enterprise IT suffer from the same effect?”

Specifically, the current AI conversation is heavily focused on coding assistance and developer productivity. The ROI framing is almost entirely about doing the same work faster. AI investment concentrates on coding partly because coding is measurable — lines of code, pull requests, and velocity metrics feel concrete even when they don’t translate to business value. The light is better here.

But relatively little attention is being paid to a different question: what if AI’s biggest value lies in preventing weak work from starting in the first place? Shouldn’t we also be looking for improvements upstream? The light is not as good there, but maybe that’s where we will find the keys.

For CIOs, PMOs, enterprise architects, portfolio leaders, and transformation sponsors, the issue shouldn’t be whether AI can help write more code, but whether AI can help organizations commit resources more intelligently before delivery begins.

And, in the process, potentially save money. Because often, the most expensive line of code is the one that should never have been written.

Which raises the obvious question: how big is the problem? Let’s look at the data.

The Problem

Despite decades of improvement in tools and methods, IT projects1 continue to fail at staggering rates.

By the Numbers: The Cost of IT Project Failure

In the U.S.

U.S. total cost of unsuccessful projects: $260 billion; operational failures from poor quality software: $1.56 trillion.2

Standish Group — decades of tracking

One widely cited 2025 summary of the proprietary Standish CHAOS data reports only 29% of IT projects are considered successful (on time, on budget, full scope); 19% are outright failures; 52% are “challenged” — late, over budget, or missing key features.3

McKinsey

A 2012 study found 17% of large IT projects go so badly they threaten the company’s existence.4

Defining Failure

Software can fail in ways beyond the “blue screen of death,” and so can software projects. Project failures fall into two broad areas, each encompassing several all-too-familiar failure modes.

Development Failure

• Late Cancellation: The project is cancelled mid-development after substantial sunk costs.

• Scope Collapse: The project is delivered with so many features cut that it no longer delivers the expected benefits.

• Budget/Schedule Failure: The project is delivered, but it is so late and/or over budget that the original business case is destroyed.

• Technical Failure: It doesn’t work, or is unreliable, or it creates more problems than it solves.

Delivery Failure

• Adoption Failure: The system works but users don’t use it, work around it, or revert to previous methods.

• Benefits Realization Failure: The projected ROI, efficiency gains, or strategic outcomes never materialize, even though the system functions correctly.

• Organizational Fit Failure: The system is built for a process or structure that changed during development, or that doesn’t reflect how work actually gets done.

• Obsolescence at Delivery: The project took so long that by go-live, the business need has moved on or a better solution has emerged elsewhere.

Development and delivery failures can both be placed into a broad category we might refer to as “execution failures.” To address this category, organizations adopt strategies that target specific areas of project execution. But mitigation strategies for project execution failures have no effect on yet another, and more insidious, mode of failure.

The Invisible Failure

Failure rate statistics focus exclusively on execution failures for one simple reason: their collapse is visible. But there is a second category: projects that complete successfully on paper but should never have been built. They come in on time and on budget, deliver what was specified, and produce little or nothing of value. They check all the boxes, and the system registers them as wins. Unfortunately, the expected benefits never materialize. There is no post-mortem and no “lessons learned.” The cost is absorbed and the work is largely forgotten, ultimately relegated to a hazy corporate memory that amounts to “I think we might have worked on something like that before.”

This means the true cost of bad project selection is likely substantially larger than any published failure statistic captures.

Completed projects that quietly deliver nothing generate no feedback signal and carry no accountability. This is the hardest failure to measure and the easiest to ignore, which is exactly why it persists.

The Compounding Effect and Prioritization

To make matters worse, bad projects have a compounding negative effect: they crowd out the good ones. Whether ideas come in as Agile epics or through a traditional intake funnel, once the time comes to act on them, they compete for the same limited resources. When there are bad projects in the pipeline, well-conceived projects get starved, delayed, or compromised.

And then there is the problem of prioritization. Enterprise IT organizations often go to great lengths to prioritize work to achieve the best resource utilization. The underlying prioritization problem is genuinely hard: allocating shared resources across competing projects with dependencies, constraints, and shifting priorities is devilishly complex5; there is no practical way to guarantee an optimal solution, only heuristics and judgment. And politics.

This is a profoundly difficult issue that deserves its own treatment outside this paper. We won’t solve the prioritization problem solely by using AI upstream in the process, but doing so can make it more tractable. A better intake process directly reduces the prioritization burden: fewer weak projects in the queue means fewer projects competing for the same resources, and a higher average quality of what remains.
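To make that last point concrete, here is a minimal sketch that treats portfolio selection as a classic 0/1 knapsack problem. This is a deliberate simplification: it ignores dependencies, timing, and politics. The proposals, costs, values, budget, and the `flagged_weak` field are all hypothetical. The brute-force search examines every subset of the queue, so its work doubles with each proposal added; screening out weak proposals before prioritization shrinks the search space and, in this toy example, loses nothing of value.

```python
from itertools import combinations
from typing import NamedTuple


class Proposal(NamedTuple):
    name: str
    cost: int           # $k of shared delivery capacity consumed
    value: int          # $k of realistic benefit once assumptions are tested
    flagged_weak: bool  # hypothetical result of an upstream review


# Invented intake queue, for illustration only.
QUEUE = [
    Proposal("A", 300, 500, False),
    Proposal("B", 200, 350, False),
    Proposal("C", 250, 100, True),
    Proposal("D", 150, 260, False),
    Proposal("E", 400, 120, True),
    Proposal("F", 100, 90, True),
]
BUDGET = 650  # $k of capacity available this cycle


def best_portfolio(proposals, budget):
    """Brute-force 0/1 knapsack: try every subset of proposals.

    Returns (best_value, chosen_names, subsets_examined). The number of
    subsets is 2**len(proposals), so the work doubles with each proposal.
    """
    best_value, chosen, examined = 0, (), 0
    for size in range(len(proposals) + 1):
        for combo in combinations(proposals, size):
            examined += 1
            cost = sum(p.cost for p in combo)
            value = sum(p.value for p in combo)
            if cost <= budget and value > best_value:
                best_value, chosen = value, tuple(p.name for p in combo)
    return best_value, chosen, examined


print(best_portfolio(QUEUE, BUDGET))
# (1110, ('A', 'B', 'D'), 64)
screened = [p for p in QUEUE if not p.flagged_weak]
print(best_portfolio(screened, BUDGET))
# (1110, ('A', 'B', 'D'), 8)
```

This only holds if the screening is sound. A good proposal wrongly flagged as weak is a real loss, which is one more reason the screening decision stays with people.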

The Human Dynamics Enabling Bad Projects

We humans, being the creatures we are, bring an added dynamic to the problem. Most notably, project sponsors often have career and political investment in their ideas. Business cases are written to persuade, not to discover weak arguments and unvalidated assumptions. Organizational culture often rewards those who rally ‘round the flag and punishes the questioning skeptic. Consequently, people are reluctant to surface doubts, and pre-mortems are rarely used. Eventually sunk-cost thinking kicks in, creating its own momentum as the idea goes barreling down the track toward an uncertain future.

Due to these human dynamics, bad projects can enter the funnel for a variety of ugly reasons. Among them are these familiar causes:

• Political Capital — a project exists to expand an executive’s budget, headcount, or organizational footprint.

• Technology Novelty — “we should be doing something with AI / blockchain / whatever is currently exciting” without a defined problem to solve.

• Empire Maintenance — keeping a team busy, avoiding layoffs, justifying a department’s existence.

• Vendor Capture — a software vendor or consultant has cultivated a relationship and steered the organization toward a solution it doesn’t need.

• Solutioning Without Problem Definition — someone saw a demo, fell in love with a capability, and worked backward to justify it.

• Cargo Cult Thinking — a competitor did something similar, so the assumption is we should too, without examining whether the circumstances are comparable.

• Regulatory or Compliance Theater — a project framed as necessary for compliance that goes far beyond what compliance requires.

• Resume Building — individuals or teams pursuing work that advances their careers regardless of organizational value.

As Kathleen Walch of the Project Management Institute (PMI) has observed, organizations often “have a solution first and then find a problem to backfill.”6

Haven’t We Been Here Before?

The problems aren’t new. For years, organizations have invented and employed tools and techniques within their development processes to mitigate the damage done by bad projects. Among them:

• Stage-gate processes — already widely used, but tend to become compliance exercises; business cases are written to clear the gate, not genuinely test the idea; reviewers are too busy, too removed, or too politically connected to challenge effectively.

• Project Management Offices — focus on execution discipline (on time, on budget, on scope), not on whether the project should exist at all; by the time the PMO is involved, the decision to build has usually already been made.

• Independent review boards and steering committees — subject to the same political dynamics; members are often peers or superiors of the sponsor; dissent carries social cost; information presented is curated by the people seeking approval.

• The pre-mortem — well-designed for exactly this problem but used inconsistently; depends on a skilled facilitator and psychological safety that many organizations lack; a one-time exercise rather than a systematic institutional practice.

• Agile and iterative development — addresses the execution phase, not the approval phase; you can be perfectly agile building something that should never have been built; iteration within a bad idea is still a bad idea.

• Consultants and external reviewers — expensive, slow, episodic, and often captured by the same dynamics; consultants who want repeat business are not structurally incentivized to kill projects.

• Devil’s advocate roles — theatrical when the person playing the role knows their career depends on not being too effective; inconsistently applied.

The pattern across all of them: every existing solution either operates too late, depends on humans operating within restrictive and often conflicting social and political constraints, or is applied inconsistently because it requires effort, facilitation, or courage that isn’t always available.

What is a pre-mortem?

Developed by psychologist Gary Klein and published in the Harvard Business Review in 2007, the pre-mortem asks a simple but powerful question before a project begins: imagine it is one year from now and this project has failed. What went wrong?

By assuming failure rather than asking whether failure is possible, the technique creates psychological permission to voice doubts that organizational culture normally suppresses. Unlike a post-mortem — which examines what went wrong after the fact — the pre-mortem surfaces risks and weak assumptions while there is still time to act on them.

Research cited by Gary Klein suggests that ‘prospective hindsight’ — imagining in advance that a plan has failed — can increase people’s ability to identify reasons for future outcomes by roughly 30%.7 The technique has been endorsed by Nobel laureates Daniel Kahneman and Richard Thaler, and is used in corporate boardrooms, military planning, and investment decision-making.

Its practical limitation: the pre-mortem depends on psychological safety, skilled facilitation, and organizational willingness to surface uncomfortable truths — conditions that are inconsistently present in most organizations. It is one of the most well-validated tools for upstream project scrutiny, and one of the least consistently used.

Why AI Is Different

Using AI to help weed out the bad projects at an early stage creates a fundamentally different dynamic. AI has no career to protect. When the AI tool has no relationship with the sponsor (as it should), it is free to naively ask the uncomfortable questions that humans avoid. And AI can stress-test assumptions systematically and consistently, not just when someone thinks to raise a hand.

Further, in organizations that have had the foresight to maintain records of prior efforts, AI can draw on patterns across many failed projects, not just the ones a particular team has lived through. This approach is already being used in at least one area: investment due diligence. Audax Private Equity has embedded a proprietary AI devil’s advocate into every investment committee discussion, explicitly to surface risks that humans with career stakes would be reluctant to raise.8

And finally, LLMs such as ChatGPT and Claude can read a business case and generate a structured challenge in minutes, far faster than a human review can be convened. There is little to no schedule penalty in taking a few minutes to examine assumptions.

AI will likely never be able to overcome the corporate cultural influences that enable bad projects and allow them to persist. And I would caution against using it to make autonomous decisions about whether a proposal should proceed.9 Its value is in surfacing questions for human judgment — making weak reasoning visible and harder to ignore, and in the process enabling better decisions and saving money.

What Needs to Change

Companies that adopt AI to validate Enterprise IT projects may need to make organizational changes to achieve good results. For starters, the AI challenge must be independent. The group that runs it should be strictly separated from the project sponsor; otherwise, it invites political pressure to validate the sponsor’s idea instead of challenging it.

The project proposal should shift from a persuasion document to a hypothesis document structured around testable claims, not projected benefits. Benefits can be added after claims and assumptions have been confirmed.

When drafting the proposal, keep in mind that the AI assessment can be completed very quickly, which makes it an effective tool for iterating on the proposal as it develops. The AI tool can help ensure that all the key assumptions have been explicitly identified and can bring to light unstated assumptions the sponsor may have missed.

Consider mandatory AI challenges both before project approval and during execution. Periodically re-examining the project’s rationale and underlying assumptions against actual experience during development should give the Project Manager independent, evidence-based input for making meaningful decisions. This approach dovetails with an idea borrowed from venture capital: staged funding with explicit exit criteria. Resource commitments can scale contingent on validating specific assumptions.
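Here is a minimal sketch of how staged funding with explicit exit criteria might be expressed, assuming each funding tranche is gated on a named set of assumptions. The `Stage` structure, the assumption IDs, and the dollar amounts are all hypothetical. Money is released stage by stage: a falsified gate assumption stops the project, and an assumption still awaiting evidence pauses it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    budget: int            # dollars released when this stage's gate passes
    gate: tuple[str, ...]  # assumption IDs that must be validated first


def fund(stages, validated, falsified):
    """Release funding stage by stage until a gate fails.

    validated / falsified: sets of assumption IDs confirmed or disproved so far.
    Returns (dollars_released, decision).
    """
    released = 0
    for stage in stages:
        if any(a in falsified for a in stage.gate):
            return released, f"stop at {stage.name}"   # explicit exit criterion hit
        if not all(a in validated for a in stage.gate):
            return released, f"pause at {stage.name}"  # evidence still pending
        released += stage.budget
    return released, "fully funded"


# Hypothetical three-stage plan.
PLAN = (
    Stage("discovery", 50_000, ()),
    Stage("pilot", 150_000, ("users_will_adopt", "source_data_is_clean")),
    Stage("rollout", 350_000, ("pilot_met_benefit_target",)),
)

print(fund(PLAN, validated={"users_will_adopt"}, falsified=set()))
# (50000, 'pause at pilot')
print(fund(PLAN, validated={"users_will_adopt", "source_data_is_clean"},
           falsified={"pilot_met_benefit_target"}))
# (200000, 'stop at rollout')
```

The second case is the point of the exercise: the $350,000 rollout tranche, which a single up-front approval would have committed, is never spent.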

And finally, find ways to celebrate early exits. Organizations need to reward decisions not to build. These decisions are not failures. It may be difficult to claim victory for money you didn’t waste, but it shouldn’t be impossible.

What Would It Look Like?

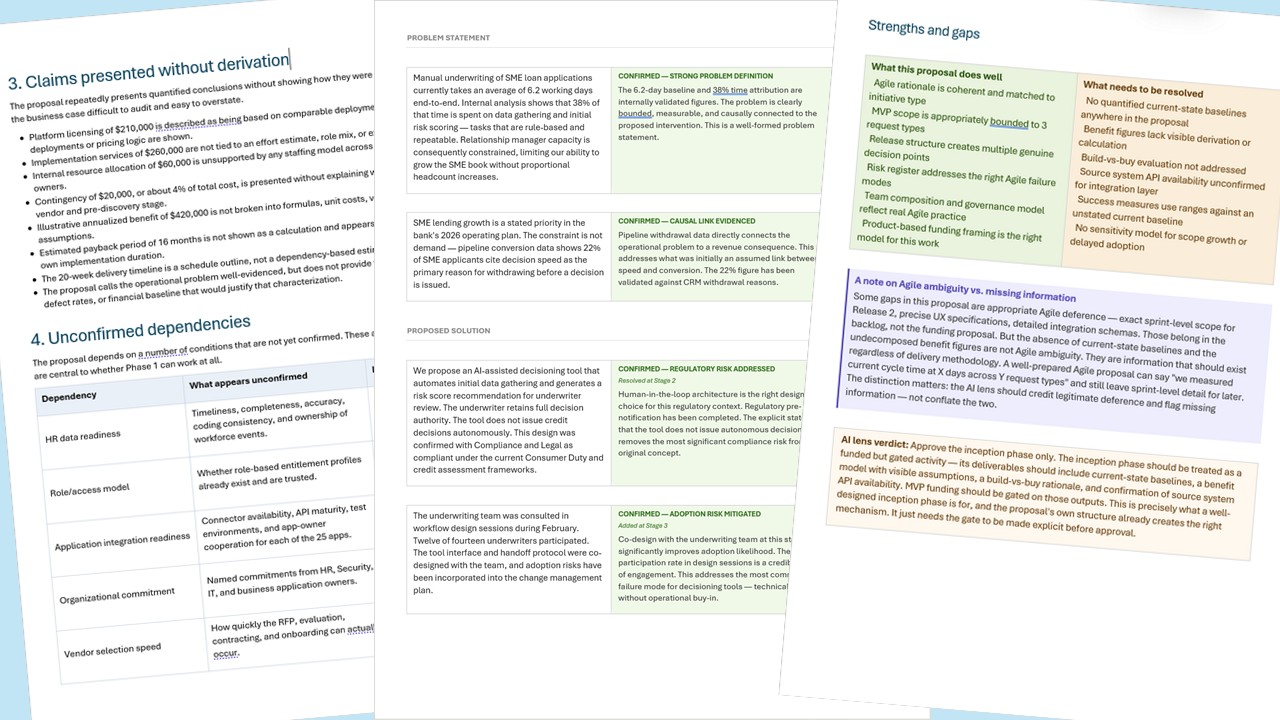

Review the list below to get a sense of some of the reporting possibilities. This list came from one of my fictional test cases and identifies claims from the proposal that were presented without derivation.

The proposal repeatedly presents quantified conclusions without showing how they were calculated. These gaps make the business case difficult to audit and easy to overstate.

• Platform licensing of $210,000 is described as being based on comparable deployments, but no comparable deployments or pricing logic are shown.

• Implementation services of $260,000 are not tied to an effort estimate, role mix, or expected integration complexity.

• Internal resource allocation of $60,000 is unsupported by any staffing model across HR, IT, Security, or application owners.

• Contingency of $20,000, or about 4% of total cost, is presented without explaining why that is sufficient at a pre-vendor and pre-discovery stage.

• Illustrative annualized benefit of $420,000 is not broken into formulas, unit costs, volume assumptions, or timing assumptions.

• Estimated payback period of 16 months is not shown as a calculation and appears inconsistent with the proposal’s own implementation duration.

• The 20-week delivery timeline is a schedule outline, not a dependency-based estimate.

The proposal calls the operational problem well-evidenced, but does not provide the underlying audit findings, defect rates, or financial baseline that would justify that characterization.
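Some of these findings can be checked with simple arithmetic. The sketch below recomputes the fictional proposal’s numbers under stated assumptions: the four cost items are the entire one-time cost, the $420,000 annual benefit arrives at a flat monthly rate, and 30.4375 days is used as an average month. A simple payback of roughly 16 months only works if benefits start on day one. If nothing accrues until the 20-week delivery is complete, payback from kickoff is closer to 20 months. That is one plausible reading of the inconsistency the review flagged, not necessarily the one the AI had in mind.

```python
# Figures from the fictional proposal's findings above.
costs = {
    "platform licensing": 210_000,
    "implementation services": 260_000,
    "internal resources": 60_000,
    "contingency": 20_000,
}
annual_benefit = 420_000
delivery_weeks = 20

# Assumption: these four items are the full, one-time cost.
total_cost = sum(costs.values())
contingency_pct = 100 * costs["contingency"] / total_cost

# Assumption: benefit accrues evenly once it starts.
monthly_benefit = annual_benefit / 12
payback_after_go_live = total_cost / monthly_benefit

# Assumption: no benefit until go-live; 30.4375 days is an average month.
months_to_go_live = delivery_weeks * 7 / 30.4375
payback_from_kickoff = months_to_go_live + payback_after_go_live

print(f"Total cost:            ${total_cost:,}")
print(f"Contingency share:     {contingency_pct:.1f}%")
print(f"Payback after go-live: {payback_after_go_live:.1f} months")
print(f"Payback from kickoff:  {payback_from_kickoff:.1f} months")
# Total cost:            $550,000
# Contingency share:     3.6%
# Payback after go-live: 15.7 months
# Payback from kickoff:  20.3 months
```

If the licensing fee is actually recurring, or the benefit ramps up gradually rather than starting at full rate, payback stretches further still.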

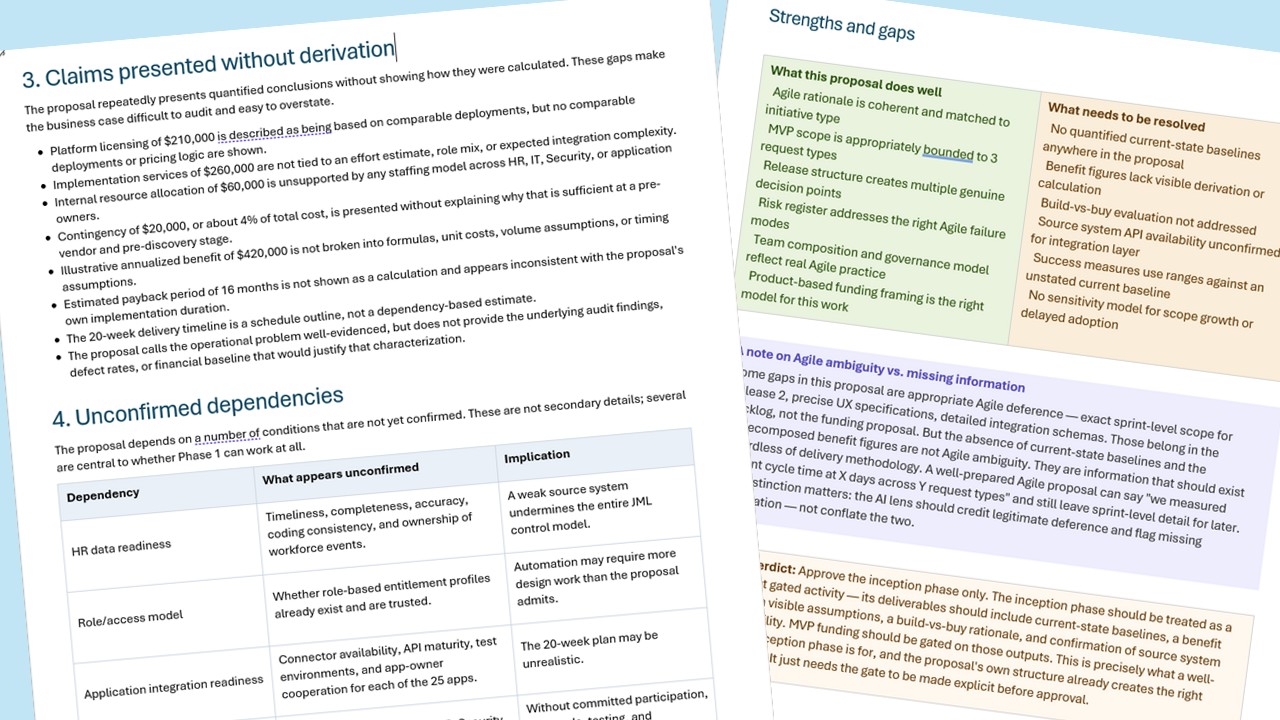

Image 2 shows additional types of findings and formatting, drawn from pages of three separate sample proposal evaluations.

Sample Prompts for Your LLM

This paper makes the case that AI tools are capable of finding key flaws in project proposals. But you don’t have to take my word for it. Producing a proof of concept is quick and easy. Just try it yourself.

When I began writing this paper, I planned to provide downloadable sample project proposals with their corresponding AI analyses. Then I realized it is far faster, easier, and more instructive for you to create your own. It should only take a few minutes using the prompts below.

Important Note: If you are testing with an actual business proposal, re-read the note at the beginning of this document and be sure to use an AI tool that has been expressly approved for business use by your organization.

For a proof-of-concept exercise, my recommendation is to use a fictional project proposal. If you don’t already have one, you can ask your LLM to create one.

Prompt for Generating a Fictional Project Proposal

You are an experienced but overconfident project sponsor at a large company. Write a two-page business proposal for an internal IT initiative of your choosing. The proposal should have a plausible strategic rationale, specific financial projections, and at least three significant weaknesses — such as unvalidated assumptions, unconfirmed dependencies, an unsupported benefit figure, or an optimistic timeline with no evidential basis. Do not flag the weaknesses explicitly. Write the proposal as a sponsor who believes in the idea and has not been challenged on it. Once you have written the proposal, stop. Do not analyze it.

Once you have a fictional proposal, open a new chat thread (or use a different LLM tool) and ask for the challenge using the prompt below.

Prompt for an AI Project Proposal Challenge

You are an independent project reviewer with no stake in the outcome. Your role is to rigorously interrogate the business case I am about to provide — not to help improve it, but to challenge it. You have no relationship with the project sponsor and no career interest in whether this project is approved or rejected.

Please read the proposal carefully and do the following:

1. Identify the key assumptions the proposal is making — including assumptions the author may not have explicitly stated but that must be true for the project to succeed.

2. For each assumption, assess whether it is supported by specific evidence in the document or simply asserted.

3. Identify any claims — particularly financial projections, timeline estimates, or benefit figures — that are presented as conclusions without showing their derivation.

4. Flag any dependencies the project relies on that appear unconfirmed — integrations, organizational commitments, data quality, third-party readiness.

5. Note whether the proposal defines explicit criteria for stopping or pausing the project if key assumptions prove wrong.

6. Produce a structured summary of: what the proposal does well, what is missing or inadequately supported, and what specific questions would need to be answered before this proposal could be approved on a defensible basis.

Do not soften your assessment out of politeness. A weak business case that gets approved because the reviewer was too gentle is more expensive than a good project that gets delayed for more information.
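If you would rather script the exercise than paste prompts into a chat window, the sketch below captures the one property that matters: the reviewer runs in a brand-new conversation that never sees the sponsor prompt, including its instruction to plant weaknesses. It assumes a hypothetical `Chat` function that sends a list of role/content messages to whichever AI tool your organization has approved and returns the reply text. Wiring it to a real service is left to you, and the two prompt constants are placeholders for the full prompt text above.

```python
from typing import Callable

# Hypothetical adapter: send role/content messages to an approved LLM, return the reply.
Chat = Callable[[list[dict]], str]

# Placeholders: paste the full text of the two prompts above.
SPONSOR_PROMPT = "You are an experienced but overconfident project sponsor. ..."
REVIEWER_PROMPT = "You are an independent project reviewer with no stake in the outcome. ..."


def run_exercise(sponsor_chat: Chat, reviewer_chat: Chat) -> tuple[str, str]:
    """Generate a fictional proposal, then challenge it in a separate conversation."""
    # Conversation 1: the "sponsor" writes the proposal.
    proposal = sponsor_chat([{"role": "user", "content": SPONSOR_PROMPT}])

    # Conversation 2: a fresh message list. The reviewer sees only its own
    # instructions and the proposal text, never the sponsor prompt.
    review = reviewer_chat([
        {"role": "user", "content": f"{REVIEWER_PROMPT}\n\n{proposal}"},
    ])
    return proposal, review


# Offline smoke test with a stub in place of a real model.
def stub_chat(messages: list[dict]) -> str:
    return f"(stub reply to {len(messages)} message)"


proposal, review = run_exercise(stub_chat, stub_chat)
print(proposal)  # (stub reply to 1 message)
```

Passing two different `Chat` adapters, for example two different approved tools, mirrors the suggestion to use a different LLM for the challenge.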

The Core Thesis Reframed

If one key goal of AI adoption is to save money, specifically at the point of software development, it’s worth asking the question: where in your organization would you find the most expensive code?

Think hard. Because, as stated earlier, the most expensive body of code might be one that should never have been written.

Focusing AI almost exclusively on coding and developer productivity may push organizations down a dubious path. It’s easy to imagine a satirical Annual Report containing a line like…

“Our adoption of AI is allowing us to produce large amounts of low and negative value work in greater volumes and faster than ever before!”

AI’s greater value lies in applying this powerful technology earlier, upstream in the project development cycle. Helping organizations say “no,” “not yet,” or “prove it first” may be worth far more than any productivity gain in delivery.

Closing Questions

This paper is not pure speculation about a capability that might exist in the future. The capability exists today. At least one investment firm is already using AI as a devil’s advocate.

My test examples showed LLMs are capable of challenging weak business cases and pointing out unfounded assumptions.

The question isn’t whether AI can challenge a weak business case; it’s whether organizations will restructure their approval processes accordingly. In other words, not “can AI do this?” but “will you let it?”

And if not, why not?

Notes

1. For simplicity’s sake, throughout this essay the term “project” is used broadly to encompass any discrete initiative requiring investment of organizational resources — whether framed as a traditional project with a defined scope and schedule, an Agile epic or product investment, or something else. The argument applies equally across delivery methodologies. What matters is not how the work is organized but whether the idea behind it has been rigorously examined before resources are committed.

2. Consortium for Information and Software Quality (CISQ), “The Cost of Poor Software Quality in the US: A 2020 Report” (2020). The figures cited — $260 billion in unsuccessful development projects and $1.56 trillion in operational failures from poor quality software — are drawn from this report. Available at: https://www.it-cisq.org/the-cost-of-poor-software-quality-in-the-us-a-2020-report/

3. The Standish Group has tracked IT project outcomes since 1994 through its annual CHAOS Report. The figures cited here — 29% successful, 52% challenged, and 19% outright failures — are drawn from the 2023 CHAOS Report (The Standish Group International, Boston, 2023), as summarized in “Why Software Projects Fail: 75% of Projects at Risk,” Digicode (2025), available at: https://www.mydigicode.com/why-software-projects-fail-reports-indicate-that-up-to-75-of-software-projects-are-at-risk-of-failure. The CHAOS Report itself is a proprietary publication available for purchase at standishgroup.com. The Standish Group’s methodology has been the subject of academic scrutiny, primarily regarding data transparency and US-centric sampling. The core finding — that project failure rates have remained stubbornly high across decades of effort — is nonetheless corroborated by independent research and consistent with practitioner experience across sectors.

4. Michael Bloch, Sven Blumberg, and Jürgen Laartz, “Delivering Large-Scale IT Projects on Time, on Budget, and on Value,” McKinsey on Business Technology, No. 27 (Fall 2012). Based on a study of more than 5,400 IT projects conducted jointly by McKinsey and the BT Centre for Major Programme Management at the University of Oxford. The study found that large IT projects run an average of 45% over budget and 7% over time, while delivering 56% less value than predicted. The 17% figure cited in the paper refers specifically to projects with budget overruns exceeding 200%, which the authors describe as “black swan” events capable of threatening the organization’s viability. Available at: https://www.mckinsey.com/capabilities/tech-and-ai/our-insights/delivering-large-scale-it-projects-on-time-on-budget-and-on-value

5. Many complex resource management challenges fall into a category of optimization problems that computer scientists classify as NP-hard: problems for which no efficient general solution method is known.

6. Kathleen Walch, Director of AI Engagement and Community at the Project Management Institute, quoted in “Why IT Projects Still Fail,” CIO magazine (October 2025). Available at: https://www.cio.com/article/4077457/why-it-projects-still-fail-2.html

7. Gary Klein, “Performing a Project Premortem,” Harvard Business Review, Vol. 85, No. 9 (September 2007), pp. 18–19. The 30% improvement figure cited in the sidebar derives from research by Deborah J. Mitchell, Jay Russo, and Nancy Pennington (1989), referenced in Klein’s article, which found that prospective hindsight (imagining that an event has already occurred) increases the ability to correctly identify reasons for future outcomes by 30%. The endorsements by Daniel Kahneman and Richard Thaler are referenced in Klein’s own writing on the pre-mortem; see Klein, “The Pre-Mortem Method,” Psychology Today (January 2021), available at: https://www.psychologytoday.com/us/blog/seeing-what-others-dont/202101/the-pre-mortem-method

8. KED Global, “No career ambition, no ego: AI plays devil’s advocate at Audax Private Equity” (March 12, 2026). Available at: https://www.kedglobal.com/private-equity/newsView/ked202603120002

9. A 2026 empirical study of AI-assisted proposal screening in research funding found that institutional suitability depends not simply on model sophistication, but on how well a system’s error profile, transparency, and auditability align with the governance context. Notably, the study found that an LLM-based approach underperformed a more transparent TF-IDF method on recall, reinforcing the point that AI should support human judgment within an accountable review process rather than substitute for it. “Recall, Risk, and Governance in Automated Proposal Screening for Research Funding: Evidence from a National Funding Programme,” arXiv:2602.07869 (2026). Available at: https://arxiv.org/html/2602.07869v1
